Earlier today a CIO asked us how we feel about integration in education. Where is it going? What common challenges do we see? What types of solutions do we see emerging as winners? As we got to talking, one theme came up that inspired this article: the traditional source of truth is, well, not.

But let’s back up for just a moment. What exactly is a “source of truth” within an information system? For systems to operate efficiently, each piece of data should be modeled and stored only once. Whenever that piece of data is required, it should be referenced from that single location within the database structure. Likewise, when such data needs to be updated, you would operate only upon this single data store. Otherwise, duplication in the data means duplication in maintaining it.
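To make the idea concrete, here is a minimal sketch in Python. The `GradeStore` class, the two reader functions, and the sample identifiers are all hypothetical and exist only for illustration; the point is that every consumer reads from one store, so a single update is seen everywhere.

```python
from dataclasses import dataclass


@dataclass
class Grade:
    student_id: str
    course_id: str
    value: str


class GradeStore:
    """Hypothetical single store: the only place grades are written."""

    def __init__(self):
        self._grades = {}

    def set_grade(self, student_id, course_id, value):
        # Writing to the same key overwrites the old value; no copy is kept.
        self._grades[(student_id, course_id)] = Grade(student_id, course_id, value)

    def get_grade(self, student_id, course_id):
        return self._grades[(student_id, course_id)]


def transcript_line(store, student_id, course_id):
    # The transcript holds no copy of the grade; it looks it up on demand.
    grade = store.get_grade(student_id, course_id)
    return f"{grade.course_id}: {grade.value}"


def gradebook_cell(store, student_id, course_id):
    # The gradebook view reads from the same store as the transcript.
    return store.get_grade(student_id, course_id).value


store = GradeStore()
store.set_grade("s1", "BIO101", "B+")
store.set_grade("s1", "BIO101", "A-")  # one correction, made in one place
```

Because neither reader keeps its own copy, the correction shows up in both the transcript and the gradebook without any extra synchronization step.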

OK, so that’s nice in theory but rarely practical or even possible in enterprise situations. Let’s say you support both student information and learning management systems. User names, course data, and grades traverse both. Then throw a separate authentication server into the mix. Do you use specialized, non-integrated assessment tools? What about the records that instructors keep on their own computers in an Excel file? Teaching assistants who email grades to the instructor, who first copies them into said spreadsheet? A department admin who receives them from the instructor for entry back into the ERP? Printed transcripts? The reality is that there isn’t anything even close to a source of truth for something as critical as student grades.

So why is this happening? Isn’t more technology available on campuses today than ever before? Isn’t the promise of these technologies to solve these sorts of inefficient processes?

The problem is not a lack of technologies that solve these challenges. In fact, the availability of so many powerful and specialized platforms is what causes this problem in the first place. Today IT administrators are presented with a cornucopia of solutions to choose from, each focusing on a very thin slice of the enterprise. Student portfolios, faculty performance, health records, facilities management, recruiting with social media: each area now has very capable, affordable, and often hosted (i.e., data off-site) options that far surpass anything that can be built in-house or provided by a single commercial source. Gone are the days when the ERP system was the monolithic be-all, end-all solution.

While this sort of specialization is ultimately beneficial, it has very profound effects on data and how it must be handled. In a word: integration.

Let’s consider one of our clients, Intellidemia, which focuses on managing course syllabi. To power their tool they require user, course, and registration data from the SIS. Credentials should be verified against an identity server. Syllabi are typically presented directly in the LMS. And since a syllabus often contains a course description, they look to synchronize this content with a catalog solution.

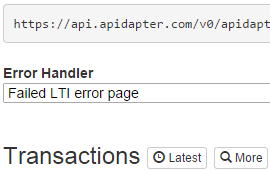

In the past it was likely that such data would be duplicated as the “source of truth” was used to populate other external systems. A report is downloaded from the SIS to be manually manipulated and uploaded. Authentication is mixed: some users are checked against an LDAP server, others use SSO or LTI, and a third group maintains an entirely separate password internally in the system. On top of that, a firewall hole needs to be opened. The LMS is being transitioned to another vendor, so not only are identifiers changing, but the two systems also need to be run in tandem during the conversion year. Description data lives in an in-house catalog tool within the Office of Academic Affairs, while outcomes are handled at the department level with non-standard Excel files. So while Intellidemia supports APIs to streamline such integration and workflow, the inherently messy nature of the data, the legacy systems and processes involved, and uneven support for standard protocols (or even consistent naming, for that matter) make the clean synchronization and management of such data a near impossibility.

All that changes with Apidapter. Scripts that are already written to extract similar SIS data for neighboring systems can be processed and mapped to the new syllabus system API. Authentication takes place centrally so that every user, regardless of the back-end identity system used, can seamlessly access the syllabus solution. Plus, this is achieved without opening another firewall hole, which would otherwise have increased security risk. Adapters transform data on the fly so multiple LMSs can be used simultaneously regardless of required parameters and identifier construction. Finally, outcome data can first be pushed to the catalog system and then, together with descriptions, synchronized with all syllabus templates, all without customization to any in-house or third-party tools.
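As a rough illustration of what transforming data on the fly for two LMSs might look like, here is a generic Python sketch. It is not Apidapter's actual API or configuration; the function names, field names, identifier formats, and the `example.edu` domain are all assumptions made up for the example.

```python
# Generic adapter sketch. Every name and format below is hypothetical
# and does not reflect Apidapter's real interface.

def to_lms_a(record):
    # Assumed convention: LMS A wants a term-prefixed course code.
    return {
        "user_id": record["student_id"],
        "course_code": f'{record["term"]}-{record["course_id"]}',
    }


def to_lms_b(record):
    # Assumed convention: LMS B wants an email-style login and
    # underscores instead of hyphens in the section identifier.
    return {
        "login": record["student_id"].lower() + "@example.edu",
        "section": record["course_id"].replace("-", "_"),
    }


ADAPTERS = {"lms_a": to_lms_a, "lms_b": to_lms_b}


def transform(record, targets):
    """Map one SIS record to the payload each target system expects."""
    return {target: ADAPTERS[target](record) for target in targets}


# One record from the SIS, the source of truth for enrollment data.
sis_record = {"student_id": "S1001", "course_id": "BIO-101", "term": "2024FA"}
payloads = transform(sis_record, ["lms_a", "lms_b"])
```

The SIS record is never copied or edited by hand; each target receives a payload derived from it at request time, which is what lets two LMSs run in tandem during a conversion year.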

Without tight and effective integration, and consideration for what the “true” source of truth is for ever more granular data elements, the value of all campus tools is greatly diminished. That is not to say that no source of truth exists today, but it’s spread out over so many fantastic albeit independent solutions that proper, efficient, and sustainable integration becomes the name of the game. In short, Apidapter makes it easy to ensure that the proper systems remain the sources of truth for their domains.